You can run every 30 minutes, every hour or daily at a specific time.

Direct integrations? Google Sheets and Airtable are both supported directly.

According to them: Monitor web changes, job offers, prices and availability. Select content on any website you want to monitor or pick to monitor entire portal with sub-pages.

Wachete monitors changes on websites, and how frequently they check will depend on the plan you have.

Direct integrations? You can create an RSS feed from the data that Wachete extracts.

Send to webhook URL? As far as I can tell, this isn't supported.

As I mentioned, there are lots of options out there to extract data, scrape websites, and monitor changes on websites.

They're all pretty intuitive to set up. You visit the page you want to monitor, then point and click the elements you want.

They're all less than $40 per month (with Browse AI that's on an annual plan; otherwise it's $49/month).

They offer multiple ways to use the data that you get from using their service.

In one way or another, you can integrate them with Zapier so you can send the data to other apps automatically.

Are there any scraping/extraction apps that you've used that are easy and affordable? I'd love to hear about them!

To extract and scrape data from a website using JavaScript, you can use the "axios" library for making HTTP requests and the "cheerio" library for parsing the HTML and extracting the data.
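For the axios and cheerio route mentioned in this post, a minimal sketch might look like the following. The function names `cleanText` and `scrapeText`, and the `url` and `selector` arguments, are made up for illustration; the post itself doesn't prescribe any particular code, and both libraries have to be installed first (`npm install axios cheerio`).

```javascript
// Collapse runs of whitespace so extracted text is easier to work with.
function cleanText(text) {
  return text.replace(/\s+/g, " ").trim();
}

// Fetch a page and return the cleaned text of every element matching
// `selector`. Both arguments are placeholders supplied by the caller.
async function scrapeText(url, selector) {
  // Loaded inside the function so cleanText() stays usable on its own;
  // this assumes axios and cheerio are installed in the project.
  const axios = require("axios");
  const cheerio = require("cheerio");

  const response = await axios.get(url);  // response.data holds the HTML
  const $ = cheerio.load(response.data);  // parse it into a queryable document

  return $(selector)
    .map((index, element) => cleanText($(element).text()))
    .get();                               // convert to a plain array of strings
}
```

Calling `scrapeText(someUrl, "h2")` resolves to an array of strings, one per matching element. From there you could post the results to a webhook or write them to a spreadsheet, much like the no-code tools do.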

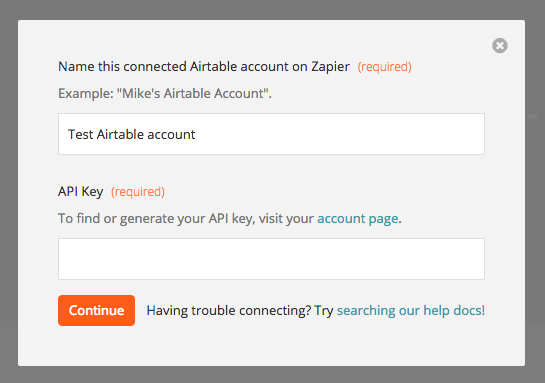

Before I start, I first want to say that there are LOTS of ways to scrape data from websites. The options I'm discussing below are the ones that make it the easiest to do (in my opinion), and relatively affordably for the average person.

According to them: The easiest way to extract and monitor data from any website.

You can choose minutes, hours, days, weeks, months.

Send to webhook URL? Yes, but it's raw data and isn't super easy to work with in Zapier.

According to them: Extract data from any website in seconds. Download instantly, scrape in the cloud, or create an API.

Direct integrations? You can sync the extracted data to Google Sheets (and you can trigger a Zap on those newly added rows, if you'd like). Airtable is coming soon, according to their website.

Zapier integration? As of the writing of this article, I don't see one.

Send to webhook URL? Yes, you can send to a webhook then trigger on that in a Zap.

This is a known limitation that Google has placed on its non-business Gmail accounts. There are two options: 1: change to a business (paid-for) Google account, OR 2: use Google Sheets as a middleman.

If you want to choose option 1, there are other communities for supporting the change to a business account.

For option 2:

1. Create a Google Sheets spreadsheet called "Gmail Middleman". In the first row, put the names of whatever data you want to send to Airtable, for example: From, Subject, Date, Body (and anything else you want to have as columns/fields in Airtable).
2. Change the step in your existing Zap that creates a new record in Airtable to be a "Create new spreadsheet row" in Google Sheets. Point it to the "Gmail Middleman" spreadsheet, and for each of the columns you gave headers for (i.e. From, Subject, Date, Body) choose the value you want from the trigger step (Gmail).
3. Create a new Zap that triggers on a new spreadsheet row. Point that trigger to the "Gmail Middleman" spreadsheet.
4. For the action step on the second Zap, do a "Create Record" in Airtable and use the information from your trigger step (i.e. From, Subject, Date, Body) to fill in your Airtable values.

You will now have your email logged in two places, Google Sheets and Airtable.
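To make the Google Sheets middleman idea a bit more concrete, here is a rough sketch of the data flow between the two Zaps. Zapier does this mapping for you in its editor, so none of this code is required. The function names and the shape of the `email` object are assumptions made purely for illustration.

```javascript
// Header row of the "Gmail Middleman" sheet, as in the example columns.
const HEADERS = ["From", "Subject", "Date", "Body"];

// First Zap (Gmail -> Google Sheets): build one spreadsheet row from an
// email, one cell per header, in header order. The lowercase keys on
// `email` are a hypothetical shape, not Zapier's actual payload.
function emailToRow(email) {
  return HEADERS.map((header) => email[header.toLowerCase()] ?? "");
}

// Second Zap (Google Sheets -> Airtable): turn a new row back into
// record fields keyed by the same header names.
function rowToAirtableFields(row) {
  return Object.fromEntries(HEADERS.map((header, i) => [header, row[i]]));
}
```

Because both steps rely on the same header names, adding a column later means updating the sheet's first row, the first Zap's mapping, and the Airtable field list together.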
